Ocean Network launches premium NVIDIA H200 GPU access

Ocean Network has made NVIDIA H200 GPUs available through its dashboard, starting at $2.16 per hour. The service includes:

- Pay-per-use pricing with escrow-protected payments

- Free compute credits for testing

- Remote execution on global nodes

- Direct IDE integration via Ocean Orchestrator

The platform addresses common cloud computing pain points by charging only for actual execution time. If a node fails, no charges apply. If code fails, users pay only for compute actually used.
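
The billing rules above can be sketched in a few lines of Python. This is a minimal illustration, not Ocean Network's actual billing logic: the `JobRun` type, the `billable_cost` function, and per-second proration of the hourly rate are all assumptions made for the example. Only the $2.16 per hour starting price comes from the announcement.

```python
from dataclasses import dataclass
from decimal import Decimal, ROUND_HALF_UP

# Starting H200 price quoted in the announcement.
H200_HOURLY_RATE_USD = Decimal("2.16")


@dataclass(frozen=True)
class JobRun:
    """Hypothetical record of one job execution (not an Ocean Network type)."""
    seconds_executed: int
    node_failed: bool = False   # the node went down during the run
    code_failed: bool = False   # the user's code exited with an error


def billable_cost(run: JobRun, hourly_rate: Decimal = H200_HOURLY_RATE_USD) -> Decimal:
    """Return the charge for a run, assuming per-second proration."""
    if run.node_failed:
        # "If a node fails, no charges apply."
        return Decimal("0.00")
    # Successful runs and code failures are both billed for the
    # execution time actually consumed, so code_failed does not
    # change the formula.
    cost = hourly_rate * Decimal(run.seconds_executed) / Decimal(3600)
    return cost.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    print(billable_cost(JobRun(seconds_executed=1800)))                   # 1.08
    print(billable_cost(JobRun(seconds_executed=600, code_failed=True)))  # 0.36
    print(billable_cost(JobRun(seconds_executed=900, node_failed=True)))  # 0.00
```

Under these assumptions, a job that runs for 30 minutes costs $1.08, a job whose code crashes after 10 minutes costs $0.36, and a job interrupted by a node failure costs nothing.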

Key features include:

- Pre-qualified, benchmarked nodes

- Containerized job execution

- Real-time monitoring and logs

- Fast recovery when nodes go offline

Users can launch AI workloads directly from VS Code, Cursor, Antigravity, or Windsurf editors without managing infrastructure or dealing with idle billing.

Demand for compute keeps climbing. The annoying part is still the workflow. Ocean Network is what we are building to make pay-per-use compute jobs feel simple across a P2P network of nodes, without the need for babysitting infrastructure. Currently, you can experiment by using

Compute Nodes are operated by humans, organizations, and incentives acting independently. A network exists only when coordination aligns these actors around shared standards, so users can pick reliable resources and get predictable execution. Without orchestration, nodes operate

Premium @nvidia H200s are now available on the Ocean Network (@ONcompute) dashboard starting from $2.16/hr⚡️ Explore GPU specs, test with free compute, and run AI workloads on remote global nodes with pay-per-use, escrow-protected payments. Try it here👇 dashboard.oncompute.ai

Building code, generating images, and writing content now take just a few clicks. Compute is no different. In minutes, you can access remote GPUs from across the globe to power your AI and ML workloads. It all starts right inside your IDE with a simple extension install. Learn

Peer-to-Peer (P2P) Compute Networks 101: Peer-to-peer compute networks let independent machines share CPU and GPU capacity. Jobs get matched to peers, run in isolated environments, and only results come back. Ocean Network is moving toward that model using Ocean Nodes plus Ocean

What happens to your AI job if a GPU node crashes halfway through? In decentralized computing, failures can happen. The real question is whether the system handles them predictably. Ocean Network is being built with this in mind: 1. Jobs run in isolated containers, so

Decentralized compute has always had one weak spot: nodes fail, and your jobs go down with them. In a real P2P network, machines drop, connections break, and hardware isn’t standardized. That’s why most “rental GPU” platforms quietly drain time through retries, failed runs, and

Scaling workloads on decentralized infrastructure is becoming easier. Soon, with Ocean Network, you’ll be able to scale compute through: 1. Parallel job execution: Run multiple containerized workloads simultaneously across distributed compute environments to increase throughput
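
Parallel execution of independent jobs can be pictured with Python's standard `concurrent.futures` module. In this sketch, `run_job` is a hypothetical local stand-in for submitting one containerized workload and waiting for its result; it is not an Ocean Network API, and the job names are made up for illustration.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Sequence


def run_job(job_name: str) -> str:
    """Hypothetical stand-in for submitting one containerized job.

    A real client would send the job to a remote node and wait for
    its output; here we just return a status string.
    """
    return f"{job_name}: done"


def run_jobs_in_parallel(job_names: Sequence[str], max_workers: int = 4) -> List[str]:
    """Run several independent jobs concurrently and collect the results.

    Executor.map returns results in the same order as the inputs,
    regardless of which job finishes first.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(run_job, job_names))


if __name__ == "__main__":
    for line in run_jobs_in_parallel(["fine-tune-a", "fine-tune-b", "eval-c"]):
        print(line)
```

Threads suit this pattern because each worker mostly waits on a remote job rather than doing local computation.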

GPU sprawl slowing down your AI workflow? AI doesn’t scale if your compute sits idle. And managing infrastructure shouldn’t feel like a second job. Ocean Network (@ONcompute) fixes this: 1. Tap into a global pool of GPUs straight from your IDE via Ocean Orchestrator. 2. Pay

Decentralized computing only works when coordination is built in. Ocean Network orchestration turns independent GPU and CPU providers into a usable compute network. When submitting a job, the orchestration layer handles matching, permissions, execution, and returning results
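
To make the matching step concrete, here is a minimal sketch of how a job request might be paired with a provider. It covers matching only, not permissions, execution, or result delivery. The `Node` and `JobRequest` types, their fields, and the "cheapest online node that fits" rule are assumptions for illustration and do not describe Ocean Network's actual orchestration logic.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Node:
    """Hypothetical description of one compute provider."""
    node_id: str
    gpu_model: str
    hourly_rate_usd: float
    online: bool


@dataclass(frozen=True)
class JobRequest:
    """Hypothetical description of what a job needs."""
    gpu_model: str
    max_hourly_rate_usd: float


def match_node(job: JobRequest, nodes: Sequence[Node]) -> Optional[Node]:
    """Pick the cheapest online node that satisfies the request, if any."""
    candidates = [
        node
        for node in nodes
        if node.online
        and node.gpu_model == job.gpu_model
        and node.hourly_rate_usd <= job.max_hourly_rate_usd
    ]
    # min() with default=None returns None when no node qualifies.
    return min(candidates, key=lambda node: node.hourly_rate_usd, default=None)


if __name__ == "__main__":
    pool = [
        Node("node-a", "H200", 2.50, online=True),
        Node("node-b", "H200", 2.16, online=True),
        Node("node-c", "H200", 1.90, online=False),
        Node("node-d", "T4", 0.40, online=True),
    ]
    chosen = match_node(JobRequest("H200", max_hourly_rate_usd=3.00), pool)
    print(chosen.node_id if chosen else "no match")  # node-b
```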

Last Alpha stretch before Beta launch. Builders have already run 700+ compute jobs across H200s, T4s, and 1060s on Ocean Network. On March 16, users can launch AI workloads from their IDE on globally coordinated GPUs. Pure automatiON is almost here!👇

The Ocean Network Beta is almost here, and it’s about to change the way developers run AI workloads. Since last week, our Alpha cohort has stress-tested the network with real workloads, running over 731 jobs so far across NVIDIA H200s, 1060s, and Tesla T4s. Starting March 16,

Stop scrolling here. Many developers waste hours stitching together tools just to get work done. The Ocean VS Code Extension keeps the entire workflow in one place: rent compute, attach data and algorithms, and monitor execution directly from your editor. Built with data privacy

Ocean Network (@ONcompute) just bridged the gap between your IDE and global NVIDIA H200s starting from $2.16/hr, setting the new standard for permissionless AI infrastructure. Go claim $100 worth of complimentary credits and start building⚡️

Last week, during our public beta launch, we gave you access to Ocean Network (ON), a tool that connects global GPUs to your AI workloads. Now let us show how you can go from code-to-node in a few clicks, and access @nvidia GPUs for as low as $2.16/hr. Psst… We have a gift for

GPUs are becoming long-term infrastructure assets as AI workloads continue to scale. Most GPUs today are either underutilized or locked inside isolated environments. Decentralized compute networks aim to change that by allowing GPU owners to contribute capacity to shared,

The vision for decentralized compute is becoming more and more of a reality. Huge congratulations to the Ocean Network team on officially flipping the switch ON their Alpha! They are bringing frictionless, P2P compute straight to your IDE. Check out how they are building the

Ocean Network Alpha is officially ON ⚡! Our exclusive cohort of chosen ONes can now run their FREE AI and data workloads on our P2P compute network, without the headache of managing complex infrastructure. (Psst… if you’re in the cohort, you might want to check your inbox

Building an AI model is easier than ever, until you’re paying for idle GPUs. You hit a bug, pause to debug, maybe step away, but your instance keeps running in the background, burning money with zero progress. That’s the hidden “tax on thinking” most developers just accept.

Ocean Nodes bring decentralized computing with features designed for scaling AI/ML workloads. Builders will get access to geographically distributed compute to train, fine-tune, and run models without relying on centralized cloud providers. Plus, with Ocean C2D, your data and

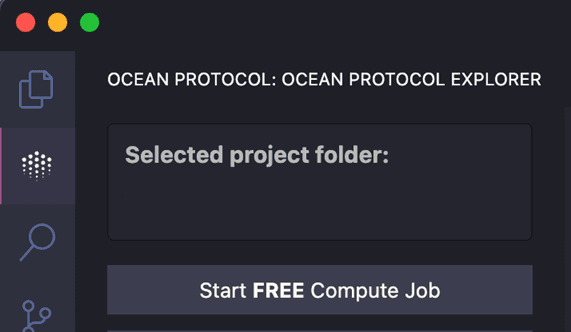

Get your ML workflow running in three steps, directly from VS Code: 1. Install the Ocean VS Code extension: Bring Ocean orchestration capabilities directly into your development environment. 2. Configure your job: Specify the dataset ID, attach your training script, and select

And just like that, it’s here. Ocean Network public Beta is live ⚡️ - Run AI jobs on global GPUs - Pay-per-use mechanism - Streamlined workflow Your first compute job is waiting. Start it today with pure automatiON in Ocean Orchestrator 👇

Ocean Network Beta is officially ON ⚡️ This is the moment we've been building toward: Run AI workloads on pay-per-use NVIDIA H200s as low as $2.16/GPU hour, straight from your IDE with a one-click code-to-node workflow. Head on to oncompute.ai to claim your $100

Access to GPUs is changing. It’s no longer about searching marketplaces, onboarding vendors, and dealing with operational overhead in the middle of your workflow. With Ocean Network, compute soon becomes a pay-per-use building block: 1. Integrate geographically distributed GPU

Ocean Network is moving fast. 361 compute jobs in the first 48 hours of Alpha, with the cohort stress testing real workloads end-to-end. Beta opens March 16 and expands access. If you want to run GPU compute from your IDE, keep an eye ON 👇

48 hours since Alpha switched ON. ⚡️ 361 compute jobs already executed. Our exclusive cohort is actively stress-testing decentralized compute, running real workloads without managing a single piece of infrastructure. On March 16, the gates open for the public Beta. Get ready

Unpopular opinion: If running a compute job still means bouncing between dashboards, terminals, and way too many tabs, the workflow is broken. Ocean Orchestrator brings containerized GPU compute jobs into your IDE, powered by Ocean Network (@ONcompute). Learn more👇

The Protocol built the foundation. Now the Network is turning it ON⚡️ Ocean Network (@ONcompute) and Ocean Orchestrator are making it easier to run containerized AI workloads without the usual cloud setup and idle billing drag. Pick the resources you need. Launch from your IDE. Get

Ocean Network Offers Pay-Per-Use GPU Access for Llama 4

Ocean Network now provides on-demand GPU access for running Llama 4 through a straightforward workflow:

- Select GPU resources via the Ocean Network Dashboard

- Pay only for actual execution time using escrow-secured payments

- Integrate directly into your IDE through Ocean Orchestrator

The platform addresses common infrastructure challenges in AI development, including idle enterprise GPU capacity and limited developer access to compute resources. Users can browse available compute by specifications and duration, with NVIDIA H200 GPUs available starting at $2.16/hr.

[Get started with Ocean Orchestrator](https://docs.oncompute.ai/ocean-orchestrator/using-ocean-orchestrator-with-ocean-dashboard)

Lunor AI Launches $1,500 Travel Data Annotation Challenge

**Lunor AI** has launched the **TripFit Tags** data annotation challenge, running until March 10 with a prize pool of **1,500 USDC**.

**How it works:**

- Read travel listings and review their details

- Categorize each listing by traveler type: Solo, Couple, Family, or Group

- Help train smarter travel search systems

The challenge offers straightforward tasks that contribute to improving travel recommendation algorithms. Participants label data that will enhance how travel platforms match listings to user preferences.

[Learn more about the challenge](https://twitter.com/lunor_ai)

Ocean Nodes Launch Decentralized GPU Network for AI Training

Ocean Protocol has launched Ocean Nodes, a decentralized computing infrastructure designed for AI and machine learning workloads.

**Key features:**

- Builders can access geographically distributed compute to train, fine-tune, and run models without centralized cloud providers

- Ocean C2D (Compute-to-Data) keeps data and algorithms sealed inside containers: compute executes remotely, and only outputs are returned

- GPU owners can monetize idle hardware by contributing capacity to the network

- Jobs run in isolated, containerized environments

The infrastructure aims to create a sovereign compute layer for AI that is open and distributed, addressing the growing demand for GPU resources as AI workloads scale.

[Learn more about Ocean Nodes](https://docs.oceanprotocol.com/developers/ocean-node)

Ocean Protocol Adds Free Compute Feature to VS Code Extension to Prevent Image Generation Scaling Issues

Ocean Protocol has introduced a **Free Compute feature** to its VS Code extension to address image generation failures during rapid scaling. The new feature allows users to:

- Start with small, fixed-seed baselines

- Lock their settings for consistency

- Save both configurations and outputs

- Maintain stable, repeatable runs

This approach helps developers **experiment and scale at their own pace** while avoiding common pitfalls that occur when scaling too quickly. Users receive **7,200 seconds (two hours) of free compute time** to test the feature and get started with their projects.

The extension continues Ocean's mission to provide seamless AI development tools that combine compute power, privacy, and algorithms directly within developers' preferred IDE environment.
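
The idea of a small, fixed-seed baseline with saved configuration and output can be illustrated as follows. This is a generic sketch, not code from the Ocean extension: `run_baseline` is a hypothetical placeholder that draws seeded random numbers where a real image generation job would run, and the config keys are invented for the example. Only the 7,200-second free allowance comes from the announcement.

```python
import json
import random

# Free compute allowance mentioned in the announcement.
FREE_COMPUTE_SECONDS = 7200


def run_baseline(config: dict) -> dict:
    """Hypothetical stand-in for a small generation job.

    A private random.Random instance seeded from the config makes the
    output depend only on the locked settings, not on global state.
    """
    rng = random.Random(config["seed"])
    output = [rng.random() for _ in range(config["num_samples"])]
    # Keep the configuration next to the output so the run can be repeated.
    return {"config": config, "output": output}


def to_record(result: dict) -> str:
    """Serialize config and output together as one JSON record."""
    return json.dumps(result, sort_keys=True)


def runs_within_budget(seconds_per_run: int, budget: int = FREE_COMPUTE_SECONDS) -> int:
    """How many runs of a given length fit in the free allowance."""
    return budget // seconds_per_run


if __name__ == "__main__":
    locked = {"seed": 42, "num_samples": 3}
    first = to_record(run_baseline(locked))
    second = to_record(run_baseline(locked))
    print(first == second)           # True: same settings, same output
    print(runs_within_budget(300))   # 24 five-minute runs fit in 7,200 seconds
```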