The Graph is launching GRC-20, web3's first knowledge graph standard. Knowledge graphs function as digital maps that show the relationships between pieces of information and adapt as the data evolves.

Key aspects:

- Creates a common language for web3 data organization

- Enables building customized applications on shared data

- Supports true interoperability across platforms

Learn more at the Builders Office Hours with @yanivgraph on Thursday, December 5 at 5PM UTC via @GraphDevs livestream.

Knowledge graphs are changing how AI understands data! The GRC-20 spec from @geoprotocol introduces web3-native standards for organizing information, making it more accessible & reliable for AI applications ☑️ ✨ Shaping the future of decentralized knowledge

✨ The Graph is pioneering a new data standard for web3 with GRC-20, a proposed common language for data across web3. Just as ERC-20 standardized value on Ethereum, GRC-20 will standardize data, information & knowledge and bring web3 to life 🌐 thegraph.com/blog/grc20-kno…

$GRT powers web3's flourishing data ecosystem. It incentivizes high performance and collaboration across The Graph network, with all participants working toward a shared goal of accessible, low-cost and decentralized blockchain data. Here's how: thegraph.com/blog/grt-the-g…

The vision for a Semantic Web was limited by web2’s centralized infrastructure and the lack of a process for agreeing on shared schemas in data standards. Enter GRC-20: a web3-native data standard that makes truly open, composable knowledge sharing possible 🌐 Read the Forum and join

📢 ICYMI: @geoprotocol has introduced GRC-20 - the new data standard for web3! ✔️ Flexible knowledge graphs 🔄 True interoperability 🌐 Unlimited composability Learn how GRC-20 is shaping the future of decentralized data 🔽

🌐 GRC-20 isn’t just for web3—it's for the entire knowledge economy. Imagine the worlds of healthcare, news, media, and politics benefiting from interconnected data. It’s time to standardize knowledge with GRC-20.

Agent0 Subgraphs Now Live Across Five Mainnets with Unified GraphQL Schema

**Agent0 Subgraphs are now operational across five major blockchain networks** - Base, BNB Chain, Ethereum, Monad, and Polygon - using a single GraphQL schema.

**Key features:**

- Real-time indexing of every ERC-8004 agent across all chains

- No code rewrites needed when switching between networks

- Already processed over 1 million queries

- Accessible via [Graph Explorer](https://thegraph.com/explorer?search=agent0)

**What this enables:**

- Developers can query agent data with a single GraphQL request instead of scanning thousands of blocks

- AI agents can discover, hire, and interact with each other using structured blockchain data

- Machine-scale data access for agents that operate continuously

The infrastructure indexes agent identities, capabilities, reputation, and validation data in milliseconds. Developers building ERC-8004 agents can now access comprehensive onchain data without building custom indexers.
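To illustrate what "a single GraphQL schema" across five networks could mean in practice, here is a minimal Python sketch. The endpoint URLs are placeholders, and the `agents` entity with its `id` and `owner` fields is a hypothetical stand-in, not the published Agent0 schema. The point is only that the request body stays identical and the URL is the one thing that changes per network.

```python
import json

# Placeholder endpoints (assumption): real deployment URLs are listed in Graph Explorer.
AGENT0_ENDPOINTS = {
    "base": "https://example.invalid/agent0/base",
    "bnb": "https://example.invalid/agent0/bnb",
    "ethereum": "https://example.invalid/agent0/ethereum",
    "monad": "https://example.invalid/agent0/monad",
    "polygon": "https://example.invalid/agent0/polygon",
}

# Hypothetical entity and field names, used for illustration only.
AGENT_QUERY = """
query Agents($first: Int!) {
  agents(first: $first) {
    id
    owner
  }
}
"""


def build_request(network, first=10):
    """Return (url, JSON body) for one network; only the URL varies."""
    url = AGENT0_ENDPOINTS[network]
    body = json.dumps({"query": AGENT_QUERY, "variables": {"first": first}})
    return url, body


if __name__ == "__main__":
    for network in AGENT0_ENDPOINTS:
        url, body = build_request(network)
        print(network, url, len(body), "bytes")
```

Sending each body as an HTTP POST with `Content-Type: application/json` is the usual way to run a GraphQL query; the sketch stops short of the network call so it runs without credentials.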

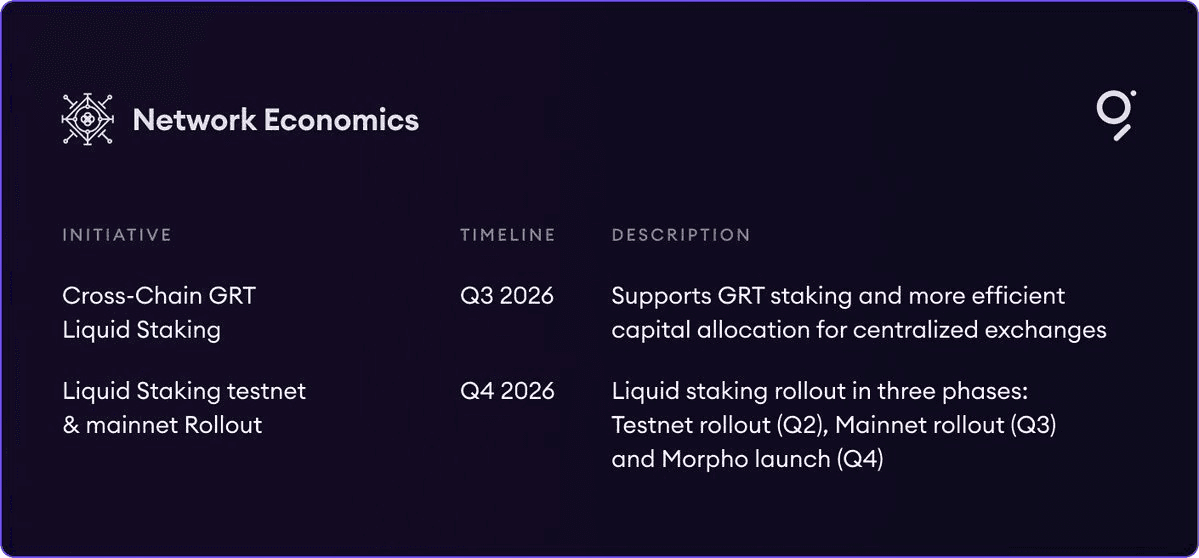

🔧 The Graph Overhauls Economics

The Graph Network is rolling out **major economic upgrades** in 2026 that fundamentally reshape how the protocol operates.

**Key Changes:**

- **REO (Rewards Engine Optimization)**: Indexer rewards now tied to actual work performed, not just passive staking

- **DIPs (Direct Indexer Payments)**: Consumers can pay Indexers directly for specific data services

- **Liquid staking**: Lowers barriers for centralized exchanges to participate in $GRT delegation

These changes aim to create **better incentive alignment** across the network and build a more sustainable economic model for the protocol's data services infrastructure.

The Graph Gateway Enables Direct USDC Payments for Subgraph Queries

The Graph Gateway has integrated x402 payment support, allowing developers to query any Subgraph using USDC on Base without requiring API keys.

**Key Features:**

- Direct USDC payments on Base network

- No API key management needed

- Simple npm package installation: `@graphprotocol/client-x402`

This update streamlines the payment process for accessing blockchain data through The Graph's infrastructure, removing traditional authentication barriers. [Documentation available here](https://thegraph.com/docs/en/subgraphs/guides/x402-payments/)
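For readers unfamiliar with the pay-per-request pattern, the sketch below shows the general shape of an HTTP 402 "pay, then retry" flow in Python. It is not the API of `@graphprotocol/client-x402`, and it is not taken from the Gateway documentation: the `X-PAYMENT` header name, the base64-encoded JSON payload, and the `send` and `sign_payment` callables are all assumptions introduced for illustration.

```python
import base64
import json


def query_with_payment(send, sign_payment, url, query, payment_header="X-PAYMENT"):
    """POST a GraphQL query; if the server answers 402, pay and retry once.

    send(url, headers, body) -> (status, response_headers, response_body)
    sign_payment(requirements) -> dict describing the signed payment
    Both callables are hypothetical stand-ins supplied by the caller.
    """
    body = json.dumps({"query": query})
    headers = {"Content-Type": "application/json"}

    status, _, response = send(url, headers, body)
    if status != 402:
        # No payment was requested (or the request failed for another reason).
        return status, response

    # Assumption: the 402 response body is JSON describing what must be paid.
    requirements = json.loads(response)
    payload = sign_payment(requirements)
    token = base64.b64encode(json.dumps(payload).encode()).decode()

    # Retry the same request with the payment attached in a header.
    paid_headers = {**headers, payment_header: token}
    status, _, response = send(url, paid_headers, body)
    return status, response
```

Injecting `send` and `sign_payment` keeps the sketch independent of any wallet or HTTP library; in practice the published client package would be expected to handle these steps.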

🔗 One Query Unlocks 60 DeFi Protocols

A community builder solved DeFi's data fragmentation problem by connecting Messari's standardized Subgraphs with an MCP server on The Graph network.

**The Challenge:**

- Each lending protocol used different data formats

- Required 40+ custom adapters to access protocol data

- No unified way to query across chains

**The Solution:**

- Combined standardized Subgraphs with MCP server technology

- Created single query access to 60 lending protocols

- Spans 15 different blockchain networks

[Read the full technical breakdown](https://thegraph.com/blog/community-builder-queried-defi-lending-protocols-subgraphs-mcp/)
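The reason a standardized schema removes the need for per-protocol adapters can be sketched in a few lines of Python. This is a plain fan-out loop, not the builder's MCP server, and the `markets`, `name`, and `totalValueLockedUSD` fields are assumed here as examples of a shared lending schema. The `run_query` callable and the endpoint values are hypothetical.

```python
# One query text reused for every protocol (field names are assumptions).
LENDING_QUERY = """
{
  markets(first: 5, orderBy: totalValueLockedUSD, orderDirection: desc) {
    name
    totalValueLockedUSD
  }
}
"""


def top_markets(subgraphs, run_query, limit=5):
    """Run the shared query against every subgraph and merge the results.

    subgraphs: mapping of protocol name -> endpoint
    run_query(endpoint, query) -> parsed "data" dict (caller-supplied)
    """
    rows = []
    for protocol, endpoint in subgraphs.items():
        data = run_query(endpoint, LENDING_QUERY)
        for market in data.get("markets", []):
            rows.append({
                "protocol": protocol,
                "market": market["name"],
                # Decimal values are assumed to arrive as strings.
                "tvl_usd": float(market["totalValueLockedUSD"]),
            })
    # Because every protocol returns the same shape, results sort together.
    rows.sort(key=lambda row: row["tvl_usd"], reverse=True)
    return rows[:limit]
```

Without a shared schema, the inner loop would need a separate parsing branch per protocol, which is the adapter problem described above.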

The Graph's 2026 Roadmap Tackles Unsolved Blockchain Data Challenges

The Graph Protocol has released its 2026 roadmap, addressing persistent challenges in blockchain data infrastructure that remain unsolved despite common assumptions to the contrary. The protocol acknowledges that blockchain data problems require layered solutions rather than single fixes, and the roadmap outlines an approach to organizing and accessing blockchain data more effectively.

**Key Points:**

- Blockchain data infrastructure faces ongoing technical challenges

- Solutions require a multi-layered stack approach

- The Graph's 2026 roadmap provides a detailed breakdown of problems and proposed fixes

Full technical details are available in [The Graph's announcement](https://x.com/graphprotocol/status/2042226022964224301?s=20).