Ocean Network has officially launched its Alpha, bringing peer-to-peer compute directly into development environments.

What's New:

- Frictionless P2P compute accessible straight from your IDE

- Next-generation orchestration layer now live

- Builds on Ocean's existing VS Code extension capabilities

How It Works: The network connects independent GPU and CPU providers into a usable compute infrastructure. Jobs run in isolated containers through Ocean Compute-to-Data (C2D), with only results returned to users.

Key Features:

- Pay-per-use model with Web3 wallet authentication

- No idle billing - pay only when jobs run

- Geographically distributed resources

- Privacy-preserving containerized execution
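The pay-per-use and no-idle-billing points above come down to simple arithmetic: cost depends only on the seconds a job actually runs. Here is a minimal sketch, assuming a hypothetical per-second rate and hypothetical job names. The source does not state Ocean Network's actual pricing or billing units.

```python
from dataclasses import dataclass


@dataclass
class JobRun:
    """One completed job; only its run time is billable."""
    name: str
    run_seconds: int


def pay_per_use_cost(runs, rate_per_second):
    """Sum the cost of the given runs. Idle time between runs is never counted."""
    return sum(run.run_seconds * rate_per_second for run in runs)


# Hypothetical rate and jobs, for illustration only.
RATE_PER_SECOND = 0.002
runs = [JobRun("fine-tune", 1800), JobRun("inference", 300)]

billable_seconds = sum(run.run_seconds for run in runs)
total = pay_per_use_cost(runs, RATE_PER_SECOND)

print(f"Billable seconds: {billable_seconds}")
print(f"Total cost: {total:.2f}")
```

With no jobs submitted, the list of runs is empty and the cost is zero, which is the "no idle billing" case.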

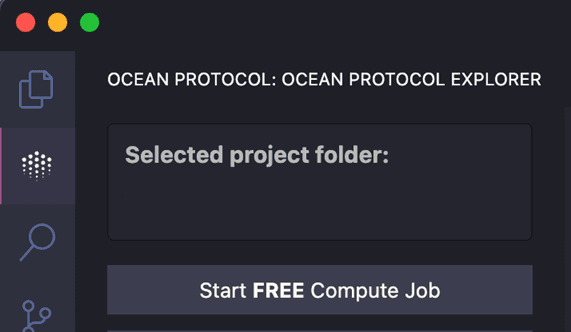

Developers can access the system through VS Code, Cursor, Antigravity, or Windsurf editors using the Ocean VS Code extension.

Demand for compute keeps climbing. The annoying part is still the workflow. Ocean Network is what we are building to make pay-per-use compute jobs feel simple across a P2P network of nodes, without the need for babysitting infrastructure. Currently, you can experiment by using

Compute Nodes are operated by humans, organizations, and incentives acting independently. A network exists only when coordination aligns these actors around shared standards, so users can pick reliable resources and get predictable execution. Without orchestration, nodes operate

Building code, generating images, and writing content now take just a few clicks. Compute is no different. In minutes, you can access remote GPUs from across the globe to power your AI and ML workloads. It all starts right inside your IDE with a simple extension install. Learn

Peer-to-Peer (P2P) Compute Networks 101: Peer-to-peer compute networks let independent machines share CPU and GPU capacity. Jobs get matched to peers, run in isolated environments, and only results come back. Ocean Network is moving toward that model using Ocean Nodes plus Ocean

What happens to your AI job if a GPU node crashes halfway through? In decentralized computing, failures can happen. The real question is whether the system handles them predictably. Ocean Network is being built with this in mind: 1. Jobs run in isolated containers, so

Scaling workloads on decentralized infrastructure is becoming easier. Soon, with Ocean Network, you’ll be able to scale compute through: 1. Parallel job execution: Run multiple containerized workloads simultaneously across distributed compute environments to increase throughput
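The parallel job execution mentioned above follows a familiar fan-out pattern: submit several independent jobs at once, then collect their results. Here is a minimal local sketch using Python's standard thread pool. The `run_job` function is a hypothetical stand-in for submitting a containerized job and is not part of any Ocean API.

```python
from concurrent.futures import ThreadPoolExecutor


def run_job(job_id):
    """Hypothetical stand-in for submitting one containerized job and waiting for its result."""
    return {"job_id": job_id, "status": "done"}


def run_jobs_in_parallel(job_ids, max_workers=4):
    """Run all jobs concurrently and return their results in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves the order of job_ids in its results.
        return list(pool.map(run_job, job_ids))


results = run_jobs_in_parallel(["job-a", "job-b", "job-c"])
for result in results:
    print(result["job_id"], result["status"])
```

Throughput improves only when the jobs are independent of each other, which is why the pattern suits batches of separate training or inference runs.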

Decentralized computing only works when coordination is built in. Ocean Network orchestration turns independent GPU and CPU providers into a usable compute network. When submitting a job, the orchestration layer handles matching, permissions, execution, and returning results
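The four orchestration responsibilities named above (matching, permissions, execution, and returning results) can be pictured with a toy model. This is a sketch under stated assumptions, not Ocean Network's actual orchestration logic: the `Node` and `Job` types, the wallet allow-list, and the "smallest sufficient node" rule are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    node_id: str
    gpus: int
    allowed_wallets: set  # hypothetical permission model


@dataclass
class Job:
    wallet: str
    gpus_needed: int


def match_node(job, nodes) -> Optional[Node]:
    """Return the smallest node that has enough GPUs and permits the job's wallet."""
    candidates = [
        node for node in nodes
        if node.gpus >= job.gpus_needed and job.wallet in node.allowed_wallets
    ]
    return min(candidates, key=lambda node: node.gpus, default=None)


def orchestrate(job, nodes):
    """Match, check permissions, 'execute', and return only the result."""
    node = match_node(job, nodes)
    if node is None:
        return {"status": "rejected", "reason": "no eligible node"}
    # Execution is simulated here; a real node would run the job in an isolated container.
    return {"status": "completed", "node": node.node_id, "result": f"output from {node.node_id}"}


nodes = [
    Node("node-1", gpus=1, allowed_wallets={"0xabc"}),
    Node("node-2", gpus=4, allowed_wallets={"0xabc", "0xdef"}),
]

print(orchestrate(Job(wallet="0xdef", gpus_needed=2), nodes))
print(orchestrate(Job(wallet="0x999", gpus_needed=1), nodes))
```

The second call is rejected because no node permits that wallet, which shows permissions being checked before any execution takes place.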

Stop scrolling here. Many developers waste hours stitching together tools just to get work done. The Ocean VS Code Extension keeps the entire workflow in one place: rent compute, attach data and algorithms, and monitor execution directly from your editor. Built with data privacy

GPUs are becoming long-term infrastructure assets as AI workloads continue to scale. Most GPUs today are either underutilized or locked inside isolated environments. Decentralized compute networks aim to change that by allowing GPU owners to contribute capacity to shared,

The vision for decentralized compute is becoming more and more of a reality. Huge congratulations to the Ocean Network team on officially flipping the switch ON their Alpha! They are bringing frictionless, P2P compute straight to your IDE. Check out how they are building the

Ocean Network Alpha is officially ON ⚡! Our exclusive cohort of chosen ONes can now run their FREE AI and data workloads on our P2P compute network, without the headache of managing complex infrastructure. (Psst… if you’re in the cohort, you might want to check your inbox

Ocean Nodes bring decentralized computing with features designed for scaling AI/ML workloads. Builders will get access to geographically distributed compute to train, fine-tune, and run models without relying on centralized cloud providers. Plus, with Ocean C2D, your data and

Get your ML workflow running in three steps, directly from VS Code: 1. Install the Ocean VS Code extension: Bring Ocean orchestration capabilities directly into your development environment. 2. Configure your job: Specify the dataset ID, attach your training script, and select

Access to GPUs is changing. It’s no longer about searching marketplaces, onboarding vendors, and dealing with operational overhead in the middle of your workflow. With Ocean Network, compute soon becomes a pay-per-use building block: 1. Integrate geographically distributed GPU

Lunor AI Launches $1,500 Travel Data Annotation Challenge

**Lunor AI** has launched the **TripFit Tags** data annotation challenge, running until March 10 with a prize pool of **1,500 USDC**.

**How it works:**

- Read travel listings and review their details

- Categorize each listing by traveler type: Solo, Couple, Family, or Group

- Help train smarter travel search systems

The challenge offers straightforward tasks that contribute to improving travel recommendation algorithms. Participants label data that will enhance how travel platforms match listings to user preferences.

[Learn more about the challenge](https://twitter.com/lunor_ai)

Ocean Nodes Launch Decentralized GPU Network for AI Training

Ocean Protocol has launched Ocean Nodes, a decentralized computing infrastructure designed for AI and machine learning workloads.

**Key features:**

- Builders can access geographically distributed compute to train, fine-tune, and run models without centralized cloud providers

- Ocean C2D (Compute-to-Data) keeps data and algorithms sealed inside containers: compute executes remotely, and only outputs are returned

- GPU owners can monetize idle hardware by contributing capacity to the network

- Jobs run in isolated, containerized environments

The infrastructure aims to create a sovereign compute layer for AI that is open and distributed, addressing the growing demand for GPU resources as AI workloads scale.

[Learn more about Ocean Nodes](https://docs.oceanprotocol.com/developers/ocean-node)

Ocean Protocol Adds Free Compute Feature to VS Code Extension to Prevent Image Generation Scaling Issues

Ocean Protocol has introduced a **Free Compute feature** to their VS Code extension to address image generation failures during rapid scaling.

The new feature allows users to:

- Start with small, fixed-seed baselines

- Lock their settings for consistency

- Save both configurations and outputs

- Maintain stable, repeatable runs

This approach helps developers **experiment and scale at their own pace** while avoiding common pitfalls that occur when scaling too quickly. Users receive **7,200 seconds of free compute time** to test the feature and get started with their projects.

The extension continues Ocean's mission to provide seamless AI development tools that combine compute power, privacy, and algorithms directly within developers' preferred IDE environment.
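The fixed-seed workflow described above (lock the settings, save both configuration and output, and confirm repeatability) can be sketched locally. This is an illustrative example only: `run_baseline` is a hypothetical stand-in for a generation job, and the file names are assumptions rather than anything the extension is known to produce.

```python
import json
import random
import tempfile
from pathlib import Path


def run_baseline(config):
    """Stand-in for a generation run: the output depends only on the locked config."""
    rng = random.Random(config["seed"])
    return [round(rng.random(), 6) for _ in range(config["num_samples"])]


def save_run(directory, config, output):
    """Save the configuration and the output side by side so the run can be repeated."""
    directory = Path(directory)
    directory.mkdir(parents=True, exist_ok=True)
    (directory / "config.json").write_text(json.dumps(config, sort_keys=True, indent=2))
    (directory / "output.json").write_text(json.dumps(output))


# Small, fixed-seed baseline with locked settings (hypothetical values).
config = {"seed": 42, "num_samples": 4}
first = run_baseline(config)
second = run_baseline(config)
print("Repeatable:", first == second)

with tempfile.TemporaryDirectory() as tmp:
    save_run(tmp, config, first)
    saved_config = json.loads((Path(tmp) / "config.json").read_text())
    print("Saved config matches:", saved_config == config)

# The free allowance from the announcement, expressed in hours.
FREE_COMPUTE_SECONDS = 7200
print("Free compute hours:", FREE_COMPUTE_SECONDS / 3600)
```

The 7,200-second allowance works out to two hours, which is enough for many small baseline runs before scaling up.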

🏛️ European Parliament Speeches Decoded

**CivicLens annotation challenge completed** with 206 contributors analyzing European Parliament speeches for political discourse patterns.

**Key achievements:**

- Labeled speeches for stance, claims, tone, topic, and ideology

- Partnership with @lunor_ai delivered high-quality political data

- Results provide insights into political discourse analysis

The challenge focused on creating datasets to help AI models detect market-moving political statements more effectively.

[Read full details](https://blog.oceanprotocol.com/annotators-hub-civiclens-turning-speeches-into-signals-e27041c3972c)