ETH Denver 2026 wrapped with a clear trend: AI agents dominated the conversation, but a critical infrastructure gap emerged.

The Problem:

- Everyone is building AI agents

- Nobody is building the identity layer these agents require

- This creates a fundamental infrastructure challenge for the ecosystem

Why It Matters: As AI agents become mainstream in crypto, the lack of robust identity and reputation systems could bottleneck adoption and functionality. Without proper identity infrastructure, AI agents can't establish trust, verify credentials, or interact securely across protocols.

The gap between innovation and infrastructure is widening.

ETH Denver wrapped. The main thing I heard? Everyone's building AI agents. Nobody's building the identity layer they need.

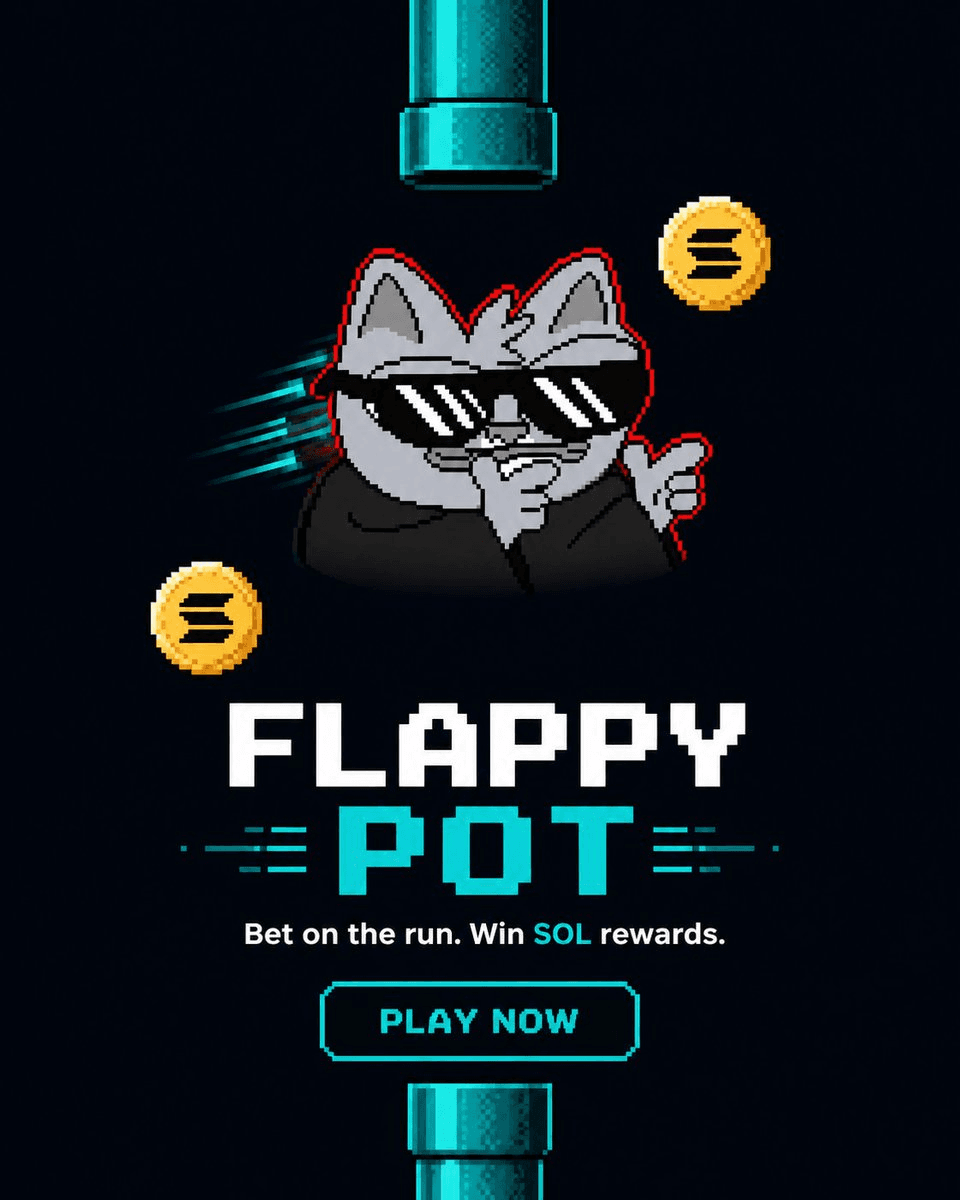

Mercle Launches Flappy Pot Game with SOL Betting

Mercle has released **Flappy Pot**, a new game featuring SOL betting functionality.

**Key Features:**

- Play-to-earn mechanics with SOL wagering

- Human-only verification to prevent bot participation

- Available on both App Store and Play Store

The game follows Mercle's previous release of on-chain chess betting on Solana Mobile's Seeker device. Both games utilize face verification to ensure only real players participate in the betting pools. Users need to update their Mercle app to access the new game.

🤖 The Dead Internet

The internet is drowning in AI-generated content. Bots are replying to other bots, and AI agents are engaging with each other in an endless loop of synthetic interactions. Every social media feed is now flooded with machine-generated posts, making it increasingly difficult to distinguish between human and artificial content.

**The new premium signal**: Verified human identity. As the "dead internet theory" becomes reality, authentic human presence and interaction are emerging as the scarcest and most valuable commodities online.

Active Attestation Establishes Chain of Custody for Agent Actions

**Active attestation** introduces a continuous chain of custody model where every autonomous agent action remains traceable to a verified human who holds accountability. Unlike static verification methods that only capture a single point in time, active attestation addresses the reality of distributed control in agent-driven systems. As autonomous transactions occur at machine speed, this approach ensures ongoing accountability rather than just initial authorization.

The key distinction: active attestation answers not only "who started this" but critically, **"who is responsible for what happens next"** as agents execute tasks independently.

This framework acknowledges that in autonomous systems, control is distributed across multiple agents and transactions, requiring a verification method that maintains accountability throughout the entire process rather than relying on one-time snapshots.
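To make the chain of custody idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not Mercle's implementation or any published protocol: the names `CustodyLog`, `CustodyRecord`, and the choice of a SHA-256 hash chain are all hypothetical. Each agent action is recorded together with the verified human accountable for it, and each record commits to the previous one, so the history cannot be rewritten quietly.

```python
import hashlib
import json
from dataclasses import dataclass

GENESIS = "0" * 64  # placeholder hash that precedes the first record


@dataclass(frozen=True)
class CustodyRecord:
    """One agent action, tied to the human accountable for it (hypothetical schema)."""
    agent_id: str
    action: str
    responsible_human: str
    prev_hash: str
    record_hash: str


def _digest(agent_id: str, action: str, responsible_human: str, prev_hash: str) -> str:
    # Hash the record fields together with the previous record's hash.
    payload = json.dumps([agent_id, action, responsible_human, prev_hash])
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class CustodyLog:
    """Append-only log linking every agent action to a verified human."""

    def __init__(self, verified_humans):
        # Assumption: human verification happened elsewhere; we only hold the result.
        self.verified_humans = set(verified_humans)
        self.records = []

    def record(self, agent_id: str, action: str, responsible_human: str) -> CustodyRecord:
        # Refuse any action that cannot be traced to a verified human.
        if responsible_human not in self.verified_humans:
            raise PermissionError(f"{responsible_human!r} is not a verified human")
        prev_hash = self.records[-1].record_hash if self.records else GENESIS
        rec = CustodyRecord(
            agent_id, action, responsible_human, prev_hash,
            _digest(agent_id, action, responsible_human, prev_hash),
        )
        self.records.append(rec)
        return rec

    def verify(self) -> bool:
        # Walk the chain and recompute every hash; any edit breaks the links.
        prev = GENESIS
        for rec in self.records:
            expected = _digest(rec.agent_id, rec.action, rec.responsible_human, rec.prev_hash)
            if rec.prev_hash != prev or rec.record_hash != expected:
                return False
            prev = rec.record_hash
        return True

    def responsible_for(self, index: int) -> str:
        # Answers "who is responsible for this action?" for any step in the chain.
        return self.records[index].responsible_human


log = CustodyLog(verified_humans={"alice"})
log.record("agent-1", "swap:10 SOL", "alice")
log.record("agent-2", "bridge:5 SOL", "alice")
```

In this sketch a later agent (`agent-2`) acting on behalf of the same person still produces a record that names `alice`, which is the "who is responsible for what happens next" question in miniature. A real system would presumably add signatures and delegation rules, which are out of scope here.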

Why Static Proof Fails in Agent-Driven Economies

**The shift from human to machine control is breaking traditional verification models.** In autonomous systems, transactions occur at machine speed with distributed control across multiple agents. The problem: **one-time verification snapshots can't maintain accountability** in this continuous, fast-moving environment.

**Key challenge:**

- Traditional model: verify once, use forever

- Agent economy reality: requires continuous verification

- Static proof assumes humans maintain control

- Autonomous transactions need real-time accountability

The fundamental issue is that **static verification methods weren't designed for systems where control is distributed** among autonomous agents operating independently. As machines execute transactions without human intervention, the gap between verification and action creates accountability blind spots. This represents a critical infrastructure challenge for web3 systems integrating AI agents and autonomous operations.
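The contrast between the two models can be sketched in a few lines of Python. This is only one possible reading of "continuous verification": it assumes an attestation carries an issue time and must be refreshed within a freshness window. The names `Attestation`, `static_check`, `continuous_check`, and the 60-second window are hypothetical and do not come from any specific protocol.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Attestation:
    """Proof that a verified human vouched for an agent at a point in time."""
    human_id: str
    issued_at: float  # seconds on some shared clock (assumption)


def static_check(att: Optional[Attestation]) -> bool:
    # "Verify once, use forever": any attestation, however old, is accepted.
    return att is not None


def continuous_check(att: Optional[Attestation], now: float, max_age_seconds: float) -> bool:
    # Accept only attestations issued within the freshness window ending at `now`.
    if att is None:
        return False
    age = now - att.issued_at
    return 0 <= age <= max_age_seconds


def authorize_actions(att: Attestation, action_times, max_age_seconds: float):
    # Evaluate each agent action at the moment it happens, not at signup.
    return [(t, continuous_check(att, t, max_age_seconds)) for t in action_times]


att = Attestation(human_id="alice", issued_at=0.0)
decisions = authorize_actions(att, action_times=[10.0, 59.0, 61.0], max_age_seconds=60.0)
```

Under these assumptions the action at `61.0` is refused until the human re-attests, while `static_check(att)` would keep approving indefinitely. That difference is the accountability blind spot described above.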

Why One-Time Verification Fails in the Agent Economy

The shift from **static to continuous verification** is reshaping how we think about proof in autonomous systems.

**The fundamental problem:**

- Traditional verification assumes humans remain in control

- Agent-driven systems operate at machine speed with distributed control

- One-time verification snapshots can't capture ongoing accountability

**Why this matters:** As autonomous agents execute transactions independently, the old "verify once, use forever" model becomes obsolete. Continuous verification becomes essential when control is no longer centralized with humans. The agent economy demands real-time accountability mechanisms that can keep pace with automated decision-making.