A former product manager turned visual artist created a live AI visual performance system in just three weeks using DaydreamLiveAI Scope on Livepeer Network.

Key Details:

- Artist @aeyestudio transitioned to full-time visual art in September

- Performed at a top San Francisco venue with AI visuals synced to music

- Displayed across a wall of old CRT televisions

- Got the system working at 2am the night before the show

Technical Foundation:

- Built using DaydreamLiveAI Scope

- Powered by Livepeer's open video infrastructure

- Daydream is open source and community-driven

The project demonstrates the creative possibilities of real-time video AI technology and what builders are creating on decentralized video infrastructure.

.@aeyestudio switched from product manager to full-time visual artist in September. Recently he performed at one of San Francisco's top venues, where he displayed live AI visuals synced to music, played across a wall of old CRT televisions. He built the whole thing in 3 weeks.

Grove Solves Real-Time AI Video Latency with Distributed GPU Network

**The Challenge**: Real-time AI video generation requires sub-100ms response times, making geographic proximity critical. Sending requests across continents creates unacceptable delays.

**Grove's Solution**: A distributed GPU coordination system that matches users with nearby processing power:

- New York users → New York GPUs

- Singapore users → Singapore GPUs

- Centralized coordination across multiple locations

**Why It Matters**: Applications like live webcam transformations, responsive game environments, and real-time sports analysis demand instant processing that traditional centralized GPU clouds can't deliver. Grove bridges the gap between local processing speed and global coordination needs.

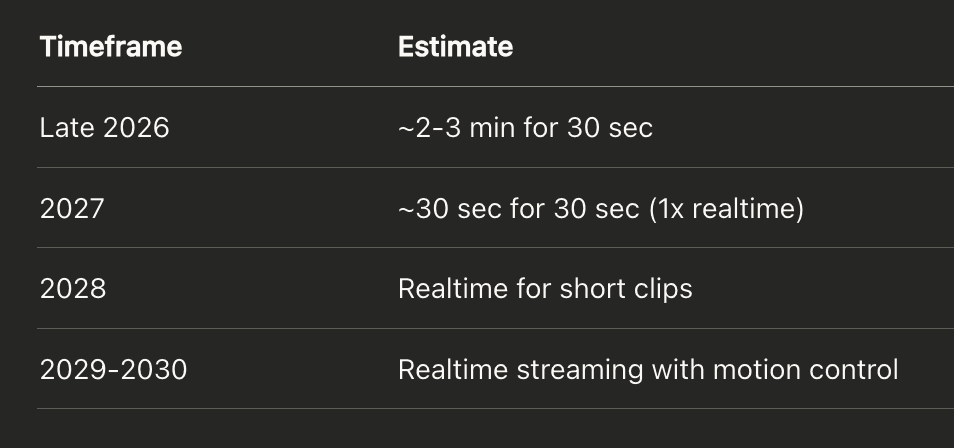
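The matching idea can be illustrated with a minimal Python sketch. It is not Grove's actual implementation: the `GpuRegion` type, the `pick_region` function, and the latency figures are all hypothetical, and the sketch simply assumes that each region reports a measured round-trip time and a count of free GPUs. Only the sub-100ms budget comes from the text above.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional

# Budget taken from the sub-100ms target; everything else here is assumed.
LATENCY_BUDGET_MS = 100.0


@dataclass(frozen=True)
class GpuRegion:
    name: str        # hypothetical region label
    rtt_ms: float    # measured round-trip time from the user to this region
    free_gpus: int   # GPUs currently available in this region


def pick_region(
    regions: list[GpuRegion], budget_ms: float = LATENCY_BUDGET_MS
) -> Optional[GpuRegion]:
    """Return the lowest-latency region that has capacity and fits the budget."""
    candidates = [r for r in regions if r.free_gpus > 0 and r.rtt_ms < budget_ms]
    return min(candidates, key=lambda r: r.rtt_ms, default=None)


# Illustrative numbers for a user located in New York.
seen_from_new_york = [
    GpuRegion("new-york", rtt_ms=8.0, free_gpus=4),
    GpuRegion("singapore", rtt_ms=230.0, free_gpus=16),
]
chosen = pick_region(seen_from_new_york)
```

Here `chosen` is the New York region. If New York had no free GPUs, the function would return `None` rather than fall back to Singapore, because a cross-continent round trip already exceeds the budget before any inference time is counted.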

Real-Time AI Video Emerges as New Category Beyond Static Generation

Real-time AI video represents a fundamental shift from traditional prompt-based video generation. While static AI video tools produce content from prompts, real-time AI video acts as an interactive collaborator, responding live to users.

**Key distinctions:**

- Static generation: A tool that creates video from prompts

- Real-time AI: An interactive collaborator that responds instantly

- Processing requirement: Every frame must render faster than a blink to maintain functionality

Daydream Live AI demonstrates this technology with live avatars running on Livepeer infrastructure. The technical challenge is significant: unlike image AI, which can afford 2-second processing delays, live video streams require near-instantaneous frame-by-frame processing to avoid breaking the user experience.

This emerging category is only now becoming technically feasible, opening new possibilities for interactive AI applications.
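To make the per-frame constraint concrete, here is a small Python sketch of the arithmetic. The frame rate of 30 frames per second is an assumption chosen for illustration, not a figure published by Daydream or Livepeer; the 2-second delay is the image-AI figure quoted above.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to process one frame at the given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def keeps_up(processing_ms: float, fps: float) -> bool:
    """True if per-frame processing fits inside the frame budget."""
    return processing_ms <= frame_budget_ms(fps)


ASSUMED_FPS = 30.0  # illustrative frame rate, not from the source

budget = round(frame_budget_ms(ASSUMED_FPS), 1)   # 33.3 ms per frame
image_style_delay_ok = keeps_up(2000.0, ASSUMED_FPS)  # False: 2 s is far over budget
```

At the assumed rate, a 2-second delay overshoots the per-frame budget by a factor of 60, which is why a pipeline that is acceptable for still images cannot simply be pointed at a live stream.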

Livepeer Launches Real-Time AI Video at 50-80% Lower Cost Than Cloud Providers

Livepeer has launched real-time AI video processing capabilities, offering significant cost savings of 50-80% compared to traditional cloud services.

**Key Features:**

- Real-time video-to-video AI processing now available

- Built on decentralized infrastructure

- Open source and community-powered

**Try It Now:** Users can test the technology through [Daydream Live](https://daydream.live), an application built on Livepeer's infrastructure that demonstrates real-time video AI capabilities.

The launch represents a practical application of decentralized video infrastructure, making AI video processing more accessible through lower costs while maintaining real-time performance.

Livepeer Releases LISAR SPE Update with Enhanced Community Node Features

Livepeer has released new **LISAR SPE release notes** following the successful LiveInfra SPE proposal that passed in July. The update enhances the **Community Arbitrum Node**, providing developers with:

- Free, reliable RPC access

- Improved uptime and redundancy

- Better overall performance

This development strengthens Livepeer's commitment to **public goods** and improving the developer experience for builders in the ecosystem.

[Read the full release notes](https://forum.livepeer.org/t/lisar-spe-release-notes/3159)
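For builders wondering what RPC access looks like in practice, the Python sketch below builds and parses a standard Ethereum JSON-RPC `eth_blockNumber` call, which Arbitrum nodes also serve. The endpoint URL is a placeholder, since the real address of the community node is not given here, and the helper names are hypothetical.

```python
import json

# Placeholder only: substitute the endpoint published in the release notes.
RPC_URL = "https://example.invalid/arbitrum-rpc"


def block_number_request(request_id: int = 1) -> str:
    """Build the JSON-RPC 2.0 body that asks a node for its latest block number."""
    return json.dumps(
        {"jsonrpc": "2.0", "method": "eth_blockNumber", "params": [], "id": request_id}
    )


def parse_block_number(response_body: str) -> int:
    """Decode the hex-encoded block number from a JSON-RPC response body."""
    payload = json.loads(response_body)
    if "error" in payload:
        raise RuntimeError(f"RPC error: {payload['error']}")
    return int(payload["result"], 16)


# Offline example using a hand-written response instead of a live call.
sample_response = '{"jsonrpc": "2.0", "id": 1, "result": "0x10"}'
latest_block = parse_block_number(sample_response)  # 16
```

To query a live node, the request body would be sent as an HTTP POST with a `Content-Type: application/json` header to the real endpoint; the sketch stays offline so it runs without network access.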